Faculty Members

Lucia Pallottino

Post-Docs

Danilo Caporale

Alessandro Settimi (Ph.D. XXIX Cycle)

Ph.D. Students

Anna Mannucci (XXXII Cycle)

Chiara Gabellieri (XXXIII Cycle)

Federico Massa (XXXIII Cycle)

Former Ph.D. Students

Vincenzo Scordio (XVIII Cycle) Currently Industrial Automation Consultant and Contractor, Main Axis

Paolo Salaris (XXIII Cycle) Currently Inria researcher (CR2) in the Lagadic Team, Sophia Antipolis, France

Mirko Ferrati (XXVIII Cycle) Currently Automated Driving Team Leader at Magazino GmbH

Alessandro Settimi (XXIX Cycle) Currently Post-Doc at Centro "E. Piaggio" Università di Pisa

Simone Nardi (XXVII Cycle) Currently Senior System Analyst at IDS Ingegneria Dei Sistemi S.p.A.

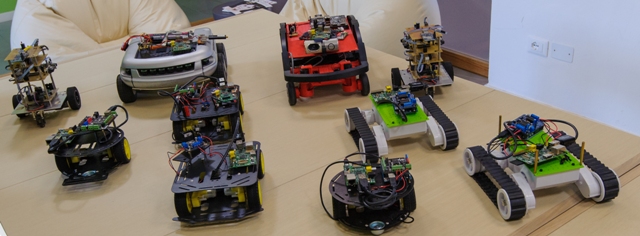

Autonomous vehicles

Autonomous driving for cars and robotic vehicles (wheeled and aerial) is attracting growing interest in several application domains, from intelligent transportation systems to logistics in industrial environments.

Map reconstruction, localization (indoor and outdoor), planning, and control are the fundamental challenges that must be addressed to develop advanced solutions. The group has developed navigation systems for both aerial and wheeled robots in indoor and outdoor environments.

These issues are particularly critical in high-speed scenarios, where real-time constraints must be taken into account.

Multi Agent Systems

Cooperative Behavior: Conflict resolution in multi-vehicle systems, establishment of collision-free routing, deadlock avoidance, and coordination of autonomous or semi-autonomous (e.g., human-piloted) vehicles in busy areas.

The standard solution for multi-vehicle coordination is a centralized approach, but such approaches are usually limited to simple problem settings involving few vehicles. To improve system performance, a decentralized algorithm for the safe, secure, and efficient coordination of a multi-vehicle system in an industrial automation environment has been developed at the Interdepartmental Research Center “E. Piaggio” of the University of Pisa. The algorithm allows multiple autonomous mobile robots to travel through a discrete traffic network composed of passage segments, intersections, and terminals, all of which are shared, discrete resources. Each mobile robot exchanges data through limited inter-robot communication and cooperates with its neighbors to manage secure access to shared resources. In particular, the limited capacity of passage segments and terminals is always respected, no collision occurs at any intersection, and deadlocks are avoided.
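The idea of capacity-limited shared resources can be illustrated with a toy sketch. This is not the algorithm developed at the Center: the function name `arbitration_round`, the synchronous rounds, and the priority rule (lower robot ID wins) are all assumptions made for illustration, and the sketch makes no attempt at deadlock avoidance. Each robot asks for the next resource on its path and advances only if that resource still has free capacity.

```python
def arbitration_round(position, path, capacity):
    """Advance robots by at most one resource in a single synchronous round.

    position: dict robot_id -> index of the resource it occupies in its path
    path:     dict robot_id -> list of resource names (segments, intersections, terminals)
    capacity: dict resource name -> maximum number of robots allowed at once
    Returns the new position dict.

    Hypothetical rule: requests are granted in order of robot ID, and a
    resource freed during this round only becomes available in the next one.
    """
    # Count how many robots occupy each resource at the start of the round.
    occupancy = {}
    for robot, index in position.items():
        resource = path[robot][index]
        occupancy[resource] = occupancy.get(resource, 0) + 1

    new_position = dict(position)
    for robot in sorted(position):  # lower ID = higher priority (assumption)
        index = position[robot]
        if index + 1 >= len(path[robot]):
            continue  # robot has reached the end of its path
        wanted = path[robot][index + 1]
        if occupancy.get(wanted, 0) < capacity[wanted]:
            # Grant access and reserve the slot so the capacity is never exceeded.
            occupancy[wanted] = occupancy.get(wanted, 0) + 1
            new_position[robot] = index + 1
    return new_position
```

With two robots whose paths both cross an intersection of capacity one, the robot with the lower ID enters first and the other waits until the intersection has been released. Two robots that each want the resource held by the other would wait forever in this sketch, which is exactly the situation a deadlock-avoidance policy has to rule out.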

Intrusion Detection: The achievement of a system’s goal is normally guaranteed only if every agent acts and cooperates according to the specification, whilst the whole system might be at risk if some agents deviate from it. Uncooperative agents, referred to as intruders, are normally discouraged and logically excluded from the system by means of an Intrusion Detection System (IDS). Detecting misbehaving agents in a robotic system is more complicated, since agents have only partial knowledge of the system’s state and must therefore construct local estimates of the missing information. The challenge is to find strategies to detect and isolate possible intruders without any form of centralization. This requires understanding what level of intelligence must be embedded in each automation component to provide satisfactory performance guarantees while remaining economically viable. The IDS is based on two elements: a local monitor, with which every agent tries to establish whether its neighbors are misbehaving using only local information, and an agreement mechanism, with which monitors share locally reconstructed data through communication and agree on a unique global view. Simple linear consensus algorithms are not applicable here, as they work on real values, while the output of a local monitor represents continuous regions where the presence or absence of an agent is required. Thus, we are developing a generalization of the concept, so-called Boolean consensus, in which agents are able to agree on other data structures.
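To give a feel for agreement on non-real-valued data, here is a minimal sketch of one simple form of Boolean consensus: every agent repeatedly combines its Boolean vector with those of its neighbors using a logical OR. The function name, the data layout, and the choice of OR as the combination rule are assumptions made for illustration; the consensus scheme under development in the group may differ. On a connected, undirected communication graph, all agents end up with the element-wise OR of the initial local views.

```python
def boolean_or_consensus(neighbors, local_views, max_rounds=None):
    """Run synchronous OR-consensus over a communication graph.

    neighbors:   dict agent -> list of agents it can communicate with
    local_views: dict agent -> sequence of bools (e.g. "is region k occupied?")
    Returns a dict agent -> tuple of bools after convergence (or max_rounds).
    """
    state = {agent: tuple(view) for agent, view in local_views.items()}
    # On a connected graph the number of agents bounds the rounds needed.
    rounds = max_rounds if max_rounds is not None else len(state)
    for _ in range(rounds):
        new_state = {}
        for agent, view in state.items():
            merged = list(view)
            for other in neighbors[agent]:
                # Element-wise OR with the neighbor's current estimate.
                merged = [a or b for a, b in zip(merged, state[other])]
            new_state[agent] = tuple(merged)
        if new_state == state:
            break  # nothing changed: agreement has been reached
        state = new_state
    return state
```

For three agents on a line (a, b, c), where a sees only the first region occupied and c only the third, every agent ends up with the same view in which both the first and the third region are occupied, even though a and c never communicate directly.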

Species Recognition: We consider societies of autonomous, possibly heterogeneous agents that act according to interaction rules depending on local views and cooperate to achieve a common goal. The interaction often requires identifying similar and different individuals; agents with similar behaviors are assigned to the same species, which groups individuals with similar dynamics and interaction rules. Every agent is required to classify its neighbors by using its sensory system and an agreement mechanism that allows agents to combine their views and reach a unique global decision on the classification. Species recognition is broadly applicable, for example to multi-agent systems in biology and robotics. Consider a highway where drivers obey different driving rules: Italian drivers follow right-hand traffic rules and pass on the left, English drivers follow left-hand traffic rules and pass on the right, and SOS drivers adopt either set of rules at convenience. Every vehicle must classify its neighbors, based on local sensors and through consensus, to guarantee safety.

Visual Servoing

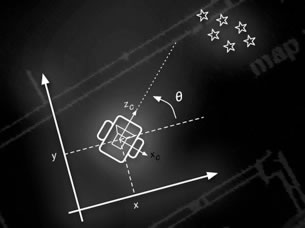

Visual SLAM: Simultaneous Localization and Mapping (SLAM) is the problem in which a mobile robot or an autonomous vehicle builds a map of an unknown environment while simultaneously localizing itself within that map. Vision systems provide plentiful information about the environment and therefore offer a rich framework for retrieving scene landmarks. This is particularly true for problems concerning robot motion and localization, which can be augmented by visual sensors or even be completely vision based. Purely appearance-based visual maps can be constructed without considering any 3D spatial information and still be used for robot localization. Our approach to mapping and localization focuses on the visual SLAM (VSLAM) problem when only monocular images are available as sensory data. Since no odometry data are used, we are presently studying the systems-theory problem called “Unknown Input Observability and Observers” in the context of VSLAM. Appearance-based VSLAM, visual-based control, and the problems that emerge from these studies are currently under investigation at Centro Piaggio.

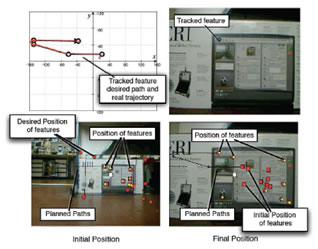

Visual Based Control: Visual servoing techniques use visual information either directly, by computing an image error signal (Image-Based Visual Servoing, IBVS), or indirectly, by estimating the state of the system (Position-Based Visual Servoing, PBVS). With the PBVS approach, for instance, the control law can be synthesized in the usual working coordinates of the robot, which generally makes the synthesis simpler. On the other hand, IBVS and other sensor-level control schemes also have several advantages, such as robustness (or even insensitivity) to modeling errors and hence suitability for unstructured scenes and environments.
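As a point of reference, the two families can be written in their standard textbook form. The symbols are assumptions introduced here, not notation taken from the group's work: s is the vector of measured image features and s* its desired value, x̂ is the pose estimated from the image and x* the desired pose, L̂ is an estimate of the interaction matrix relating the camera velocity v to the time derivative of the chosen error, L̂⁺ is its pseudo-inverse, and λ > 0 is a gain.

```latex
\begin{aligned}
\text{IBVS:}\quad & e = s - s^{*}, & v &= -\lambda\,\hat{L}_{s}^{+}\, e \\
\text{PBVS:}\quad & e = \hat{x} - x^{*}, & v &= -\lambda\,\hat{L}_{x}^{+}\, e
\end{aligned}
```

The control law has the same shape in both cases; what changes is the space in which the error is defined. Under ideal conditions (a perfect estimate of the interaction matrix and a full-rank problem), both laws give the exponential decay ė = -λe.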

However, a few practical problems still affect visual servoing approaches and depend on the particular robotic set-up available. One such issue, arising with limited field-of-view (FOV) cameras, is that of keeping the features in view during the robot's maneuvers. We have successfully solved the FOV problem for a unicycle-like vehicle in a PBVS scheme by using exclusively information from conventional cameras fixed on board and by adopting a switching strategy whose convergence is proven with modern hybrid control techniques. The visual-based parking problem of a differentially driven robot that keeps all features in view during maneuvers becomes very challenging when the path from any initial position to the target position, both on the motion plane of the robot, has to be optimal. We have solved this problem in a purely IBVS scheme by translating all optimal (shortest) 3D paths into paths on the image plane and providing a procedure to decide which optimal path to apply for any given initial image. Feedback control along optimal paths in the image plane has been obtained in an IBVS scheme whose design relies on a set of Lyapunov controllers.

Constrained Optimal Planning

Optimal Synthesis with Visual Constraint: The control of visually guided nonholonomic robots has received considerable attention recently. Nevertheless, a few practical problems still affect visual servoing approaches and depend on the particular robotic set-up available.

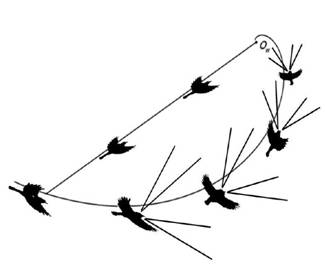

One such issue, arising with limited field-of-view (FOV) cameras, is that of keeping the features in view during the robot's maneuvers. Moreover, the visual-based parking problem of a nonholonomic robot, such as a differential drive robot (DDR), that keeps all features in view during maneuvers becomes very challenging when the path from any initial position to the target position, both on the motion plane of the robot, has to be optimal. We have studied the problem of shortest paths for a directed point moving in a plane (i.e., a DDR) to reach a target position while keeping a point fixed in the plane inside a cone that moves with the directed point. The directed point moves subject to the constraint that its instantaneous velocity is aligned with its direction. The result of this study is a complete optimal synthesis, i.e., a language of optimal control words and a global partition of the motion plane induced by shortest paths, such that each word in the optimal language is uniquely associated with a region and completely describes the shortest path from any starting point in that region to the goal point. A further inspiration for the study comes from the naturalistic observation of paths followed by raptors during hunting. In the literature, it has been shown that raptors move along a logarithmic spiral path with their head straight and one eye looking sideways at the prey, rather than following the straight path to the prey with their head turned sideways.
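The link between a fixed viewing angle and a logarithmic spiral follows from elementary geometry and can be checked numerically. The sketch below is an illustration under stated assumptions, not the optimal synthesis itself: the function names are hypothetical, the observed point sits at the origin, the directed point moves at unit speed, and the observed point is held at a constant angle phi from the heading. In polar coordinates this gives dr/dt = -cos(phi) and r dθ/dt = sin(phi), hence r(θ) = r0 exp(-θ / tan(phi)) and a path length of (r0 - r1) / cos(phi) between radii r0 and r1.

```python
import math

def spiral_radius(r0, phi, theta):
    """Closed-form radius after sweeping polar angle theta at constant bearing phi."""
    return r0 * math.exp(-theta / math.tan(phi))

def spiral_length(r0, r1, phi):
    """Closed-form path length along the spiral from radius r0 down to radius r1."""
    return (r0 - r1) / math.cos(phi)

def simulate_constant_bearing(r0, phi, r_stop, dt=1e-4):
    """Euler simulation of a directed point that keeps the origin at angle phi
    from its heading. Returns (path length, final (x, y))."""
    if not (0.0 < phi < math.pi / 2) or r_stop <= 0.0:
        raise ValueError("need 0 < phi < pi/2 and r_stop > 0")
    x, y = r0, 0.0
    length = 0.0
    while math.hypot(x, y) > r_stop:
        line_of_sight = math.atan2(-y, -x)  # direction from the point to the origin
        heading = line_of_sight - phi       # origin stays at angle phi from the heading
        x += math.cos(heading) * dt         # velocity is aligned with the heading
        y += math.sin(heading) * dt
        length += dt
    return length, (x, y)
```

For example, starting at distance 1 with phi = 45 degrees and stopping at distance 0.5, the simulated length agrees with the closed form 0.5 / cos(45°), roughly 0.707, compared with 0.5 for the straight segment. The spiral is longer, but it keeps the observed point at a fixed angle in the field of view.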